Agentic Video Editing: What It Is and Why It Changes Everything About Long-Form Workflow

Agentic video editing uses AI agents to make autonomous editorial decisions, not just automate clicks. Here's what it means and how Selects does it.

TLDR: Agentic video editing is AI that autonomously handles the entire pre-editing workflow, from raw footage to a structured, ready-to-edit timeline, so your team can skip the prep and start creating.

Most AI video tools do what you tell them. Agentic AI decides what to do.

That distinction sounds small. It isn't. It's the difference between a tool that saves you a few clicks and one that can take a pile of raw footage and hand you back a structured, editable timeline, without you touching a thing. It's the core promise of autonomous video editing, and it's already inside the workflows of the fastest-moving production teams.

Agentic video editing is still early. This post breaks down what it means, how it differs from the AI features you've used before, and what it looks like inside a real editing workflow.

Agentic AI Explained: What "Agentic" Actually Means

The word comes from AI research. An agent is an AI system that can perceive its environment, make decisions, take actions, and adjust based on the outcome, all without a human directing each step.

An agentic AI isn't just executing instructions. It's pursuing a goal.

In the context of video editing, that goal might be: take this raw footage and give me a clean, organized starting point for my edit. An agentic system doesn't need you to specify every step to get there. It figures out the path.

A16Z, whose recent piece on the future of agentic video editing lays out why this moment matters, frames it this way: traditional software is reactive; it responds to commands. Agentic AI is proactive; it works toward outcomes.

This is the foundation of agentic AI: a system that understands the goal and figures out how to get there. That shift matters enormously for video, where so much of the work before the creative edit is mechanical, repetitive, and deeply time-consuming. Most editing time goes into managing the process rather than shaping the final product.

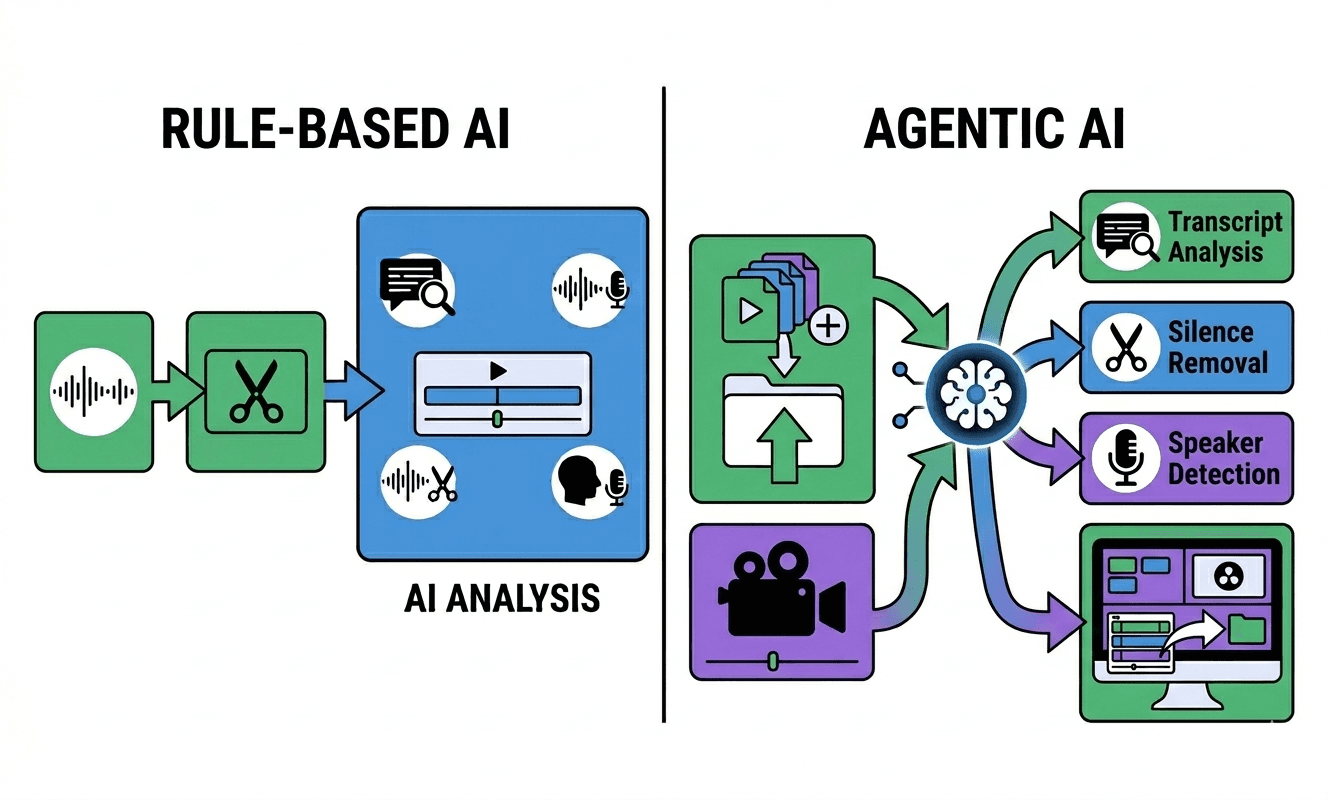

Rule-Based AI vs. Agentic AI: Why the Difference Matters for Video Production Teams

Most AI in video tools today is rule-based. That means it follows a fixed logic tree: if silence exceeds X milliseconds, remove it. If this word appears in the transcript, flag it. Useful. But not intelligent. Here's the practical difference:

Rule-based AI (what most tools do today)

Remove silence above a threshold you set

Add captions based on transcription

Apply a preset color grade

Trim to a specific length

Agentic AI (what's actually new)

Analyse hours of raw footage and determine what matters

Decide which camera angle serves the moment best

Build a coherent narrative structure from unstructured footage

Hand off a complete, organized timeline to your NLE, ready to edit

The gap between these two approaches is the gap between automating a task and automating a workflow. Most tools give you the former. Long-form video editing automation requires the latter, because the prep stage is more than one action.
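To make the contrast concrete, here is a minimal Python sketch. It is a toy under stated assumptions, not how Selects or any other tool is implemented: clips are modeled as hypothetical (kind, duration) pairs, and the function names `remove_silence` and `fit_to_runtime` are invented for illustration. The first function applies a fixed rule. The second pursues a goal (a target runtime) by checking the result and adjusting its own threshold.

```python
from typing import List, Tuple

# A clip is (kind, duration_ms), where kind is "speech" or "silence".
# This data model is a simplifying assumption for illustration only.
Clip = Tuple[str, int]


def remove_silence(clips: List[Clip], threshold_ms: int) -> List[Clip]:
    """Rule-based: drop every silence longer than a threshold the user sets."""
    return [c for c in clips if not (c[0] == "silence" and c[1] > threshold_ms)]


def runtime(clips: List[Clip]) -> int:
    """Total duration of a sequence in milliseconds."""
    return sum(duration for _, duration in clips)


def fit_to_runtime(clips: List[Clip], target_ms: int,
                   start_threshold_ms: int = 2000,
                   step_ms: int = 500) -> Tuple[List[Clip], int]:
    """Goal-driven: tighten the silence threshold until the cut fits the target.

    Returns the resulting clips and the threshold that was finally used.
    Stops at a threshold of 0 even if the goal is still not met.
    """
    threshold = start_threshold_ms
    result = remove_silence(clips, threshold)
    # Check the outcome, adjust the threshold, and try again.
    while runtime(result) > target_ms and threshold > 0:
        threshold = max(0, threshold - step_ms)
        result = remove_silence(clips, threshold)
    return result, threshold


# Hypothetical sample footage: 12 s of speech and 4.3 s of silence.
footage = [("speech", 4000), ("silence", 2500), ("speech", 3000),
           ("silence", 1200), ("speech", 5000), ("silence", 600)]

fixed_cut = remove_silence(footage, threshold_ms=2000)
goal_cut, used_threshold = fit_to_runtime(footage, target_ms=12500)
```

With this sample data, the fixed rule leaves a 13.8-second sequence, while the goal-driven loop lowers its threshold to 500 ms to get under the 12.5-second target. Real agentic systems make far richer decisions than tuning one number; the loop only shows the shape of goal-seeking behavior.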

Recent workflows make this concrete with a three-layer division: Hyperframes, Remotion, and Selects each cover one axis of the pipeline, and Claude orchestrates across all three.

For the full data on where Claude breaks down as a standalone video editor, and why splitting the orchestrating "brain" from the tool "hands" matters, the 30-minute experiment write-up covers every failure mode with measured results.

Rule-based AI automates individual actions, whilst agentic AI automates a workflow.

This is also what separates agentic editing from vibe editing, the emerging practice of directing AI with intent and feel rather than precise instructions. Vibe editing is a style of interaction. Agentic AI is the underlying capability that makes that interaction possible at scale.

What Agentic Video Editing Looks Like in Practice

The clearest working example right now is Selects by Cutback, a standalone AI pre-editing tool built specifically for long-form video. It's the closest thing that exists to replacing the role of an assistant editor with AI.

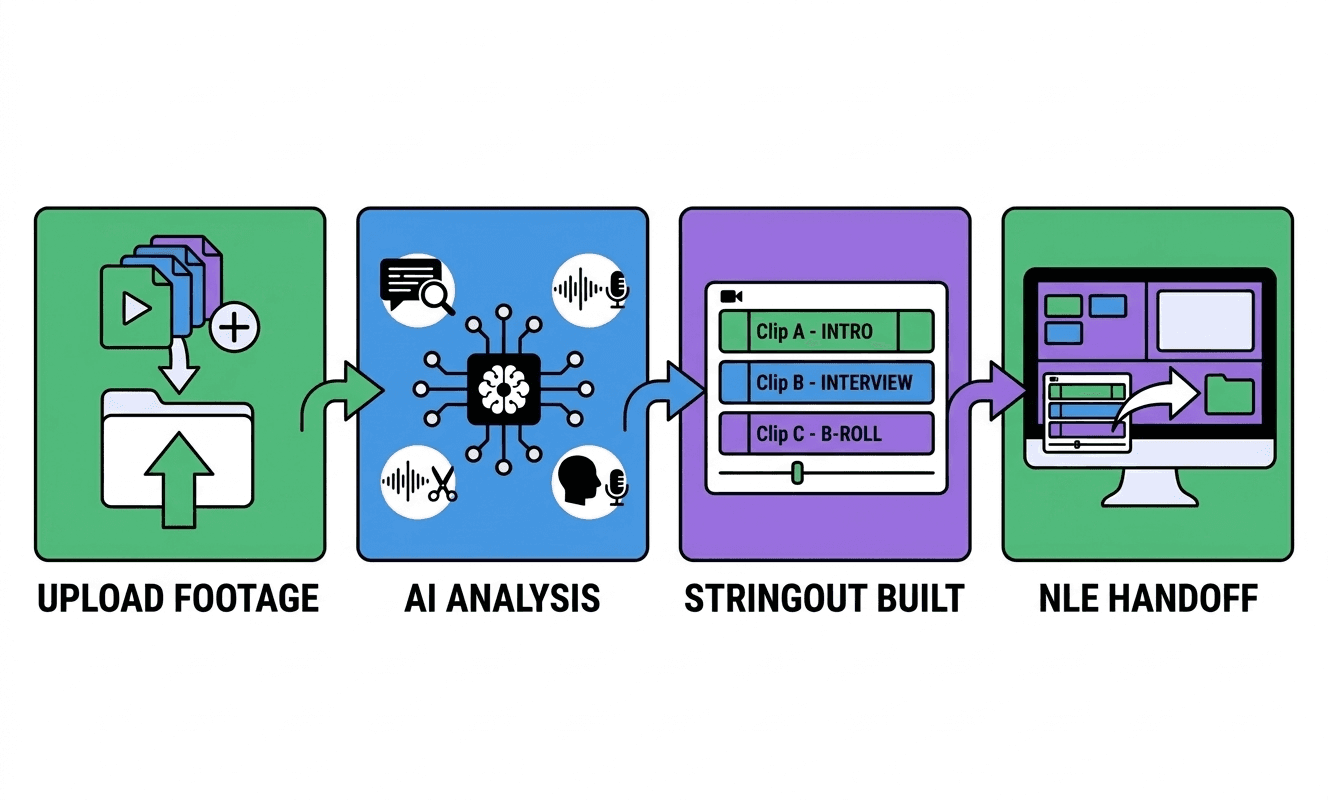

Here's the workflow:

1. Upload raw footage - Drop in your raw files, single cam or multi-cam, with external audio if needed. Selects ingests everything.

2. Automated analysis - Selects transcribes the footage, detects speakers, identifies silences and filler words, and organises content into topic-based chapters. It's not waiting for you to tell it what to look for.

3. Stringout and automated rough cut - Based on that analysis, Selects builds a stringout, a structured sequence of usable material. Silences and bad takes are removed. Camera angles are switched based on the active speaker.

4. Storyline editing - From this baseline, you can use a chat-based prompt to have Selects create storylines: rough cuts of the narrative you're looking for. This works for short-form videos too, which is useful for repurposing content and clipping.

5. NLE handoff - Once the footage has been analyzed and the timeline rearranged, the result exports directly to Adobe Premiere Pro, Final Cut Pro, or DaVinci Resolve, color-coded and labeled, with markers and transcripts included. You open your NLE and start halfway through the edit, not at the beginning.

The whole prep stage, the part that used to take an assistant editor hours, happens in minutes. And critically, you didn't specify each step. You gave the system a goal, and it executed.

That's agentic behaviour.
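Steps 2 and 3 can be sketched in a few lines of Python. This is a rough illustration under stated assumptions, not Selects' actual implementation: the `Segment` fields, the `FILLERS` set, the `CAMERA_FOR_SPEAKER` mapping, and the `build_stringout` function are all hypothetical names, and the analysis output (a speaker label and transcript text per segment) is assumed to exist already.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Segment:
    """One analyzed span of footage (hypothetical structure)."""
    start: float   # seconds from the start of the recording
    end: float
    speaker: str   # empty string means nobody is talking
    text: str      # transcript for this span


# Assumed filler vocabulary and speaker-to-camera mapping.
FILLERS = {"um", "uh", "er", "hmm"}
CAMERA_FOR_SPEAKER: Dict[str, str] = {"host": "cam_A", "guest": "cam_B"}
WIDE_SHOT = "cam_wide"  # fallback when the speaker has no dedicated angle


def is_usable(seg: Segment) -> bool:
    """Keep a segment only if someone speaks and says more than fillers."""
    if not seg.speaker:
        return False
    words = [w.strip(".,!?").lower() for w in seg.text.split()]
    return any(w and w not in FILLERS for w in words)


def build_stringout(segments: List[Segment]) -> List[dict]:
    """Return usable segments in order, each tagged with a camera angle."""
    timeline = []
    for seg in segments:
        if not is_usable(seg):
            continue
        timeline.append({
            "start": seg.start,
            "end": seg.end,
            "camera": CAMERA_FOR_SPEAKER.get(seg.speaker, WIDE_SHOT),
            "text": seg.text,
        })
    return timeline


# Hypothetical analysis output for a two-person interview.
analysis = [
    Segment(0.0, 4.2, "host", "Welcome back to the show."),
    Segment(4.2, 7.0, "", ""),                    # silence
    Segment(7.0, 8.1, "guest", "Um, uh..."),      # filler only
    Segment(8.1, 15.5, "guest", "Thanks for having me."),
]
stringout = build_stringout(analysis)
```

Here the silence and the filler-only take are dropped, and the two remaining segments are assigned to the host's and guest's cameras in turn. A production system would also need to handle overlapping speech, sync drift, and judgments about take quality, none of which this sketch attempts.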

If you're evaluating Selects against other tools in the market, the Selects vs Descript comparison breaks down exactly how the two differ at the workflow level.

Why Existing Utility AI Tools Fall Short of Agentic AI Tools

It's worth being specific here, because "AI video editing" has become a catch-all phrase applied to tools with very different capabilities.

ChatGPT

ChatGPT is a large language model. It can help you write a script, plan a structure, or draft a shot list. It cannot ingest video files, analyze footage, or make editorial decisions about what to cut. Using it for video editing means using it adjacent to the edit, not inside it. We go deeper on this in ChatGPT for video editing: what it can and can't do.

CapCut

CapCut offers smart features, including auto-captions, reframing, and one-click highlights. These are useful for short-form, but they're automation presets. The AI isn't analyzing your footage and making contextual decisions. It's applying templates.

Adobe Premiere's AI features

Adobe Premiere's AI features (Generative Extend, auto-captions, Speech to Text) are genuinely valuable inside an established workflow. But they operate on footage you've already imported and cut. They don't handle the pre-editing layer, the hours of prep before the creative work starts.

The pattern across all of these tools is the same: they operate on footage that's already been imported, trimmed, and placed on a timeline. None of them solves the bottleneck that comes before that point: the prep.

For a full walkthrough of what happens when you try to use ChatGPT as a video editor at every stage of production, we tested the complete LLM video editing workflow, including where Claude and Gemini hit the same wall.

Chat-based editing gets closer; it lets you interact with your footage through natural language inside the NLE. But it still requires the footage to already be in a timeline. The problem agentic AI solves is upstream of that.

The gap none of these tools fills: taking raw, unorganized footage and autonomously producing a structured, editable starting point. That's the AI pre-editing layer handled by tools like Selects. It's the reason automated NLE handoff is becoming a baseline expectation for high-output video production teams.

Who Benefits Most From Autonomous Video Editing AI?

Agentic pre-editing has a specific ideal customer profile (ICP). It's not for someone editing a five-minute YouTube video on their laptop. It's for anyone managing volume, where the mechanical work before the edit is a real operational constraint.

YouTube studios running regular long-form content (interviews, docuseries, and commentary), where every new project starts with hours of raw footage that isn't usable as-is. The prep bottleneck is a recurring cost. Agentic pre-editing compresses it from hours to minutes, every single time.

Podcast agencies handling multiple clients, multiple recordings per week, often with multi-cam setups and external audio. The sync-and-label stage alone is a significant chunk of billable time. An agentic tool that handles it automatically changes the economics of each project.

In-house content teams at brands, media companies, and startups, where one person is often doing three jobs. When you're editor, producer, and strategist simultaneously, anything that removes mechanical prep from your plate multiplies what you can actually ship.

For a broader look at how these teams are incorporating AI into their full workflow, the AI video editing guide for 2026 covers the full landscape.

What's Coming Next

Agentic video editing is early. What exists now handles the pre-editing layer well: ingestion, analysis, structure, and handoff. The next frontier is the edit itself.

Expect agentic systems to start making higher-level narrative decisions: identifying the strongest moments in an interview, restructuring a conversation for better flow, and generating multiple cut variations for A/B testing. The editor stays in control but starts further ahead.

The question for video teams isn't whether agentic AI will change how long-form content gets made. It already is. The question is whether your workflow is set up to take advantage of it when it becomes the standard.

Try Selects today to have your first agentic video editing experience. For more in-depth knowledge about the ins and outs of video editing, check out our latest posts on the Cutback blog or our YouTube channel.

Frequently Asked Questions (FAQ)

Q: What is agentic video editing?

A: Agentic video editing refers to AI systems that can autonomously analyze raw footage, make editorial decisions, and produce a structured, editable timeline, without requiring manual direction at each step. Unlike rule-based AI tools that automate specific actions, agentic AI pursues a goal, such as turning raw multi-cam footage into a rough cut ready for an NLE.

Q: How is agentic AI different from regular AI video editing tools?

A: Most AI video editing tools follow fixed rules: remove silence above a threshold, apply a preset, and generate captions. Agentic AI makes contextual decisions: it analyses footage, detects speakers, builds narrative structure, and hands off a complete timeline. The difference is between automating a single action and automating an entire workflow.

Q: Is Selects an agentic video editing tool?

A: Yes. Selects by Cutback is built around agentic pre-editing. It ingests raw footage, transcribes and analyses content, builds a stringout, removes silences and filler words, and exports a structured timeline directly to Premiere Pro, Final Cut Pro, or DaVinci Resolve. The prep stage happens autonomously, without manual direction at each step.

Q: What is an AI pre-editing tool?

A: An AI pre-editing tool handles the prep stage of video editing autonomously: syncing footage, transcribing audio, removing silences and filler words, organizing content into chapters, and exporting a structured timeline to an NLE like Premiere Pro, Final Cut Pro, or DaVinci Resolve. Selects by Cutback is built specifically for this layer of the workflow.

Kay Sesoko

Marketer
